Self-citations and academic assessments: Including the s-index as an additional metric thus provides important context to guide decisions based on academic value.

Scientists must compete for limited funding as well as for academic positions and recognition. Many factors contribute to success, but Hirsch’s h-index puts the emphasis squarely on citations (Hirsch, 2005). In such a system, it is perceived that more citations should lead to more funds, promotions, job security, et cetera. Hirsch’s formula rapidly became the dominant parameter informing academic ranking and resource allocation decisions, but it comes with limitations and it’s easy to manipulate. Yet most scholars and evaluation committees continue to embrace the h-index as the major way to keep score. This shows the metric has value – especially because it aims to make scientific assessment a level playing field – but we shouldn’t get carried away with how we use it.

In particular, the response to the obvious potential to game the h-index via excessive self-citation has been problematic. Inflating one’s papers with unnecessary citations of one’s previous work can easily boost the h-index and give a direct advantage to researchers in the competitive race for academic rewards (Bartneck & Kokkelmans, 2011).

Would that advantage be undeserved? Is the use of self-citations unfair? Does it taint competition?

Clearly, anyone can decide to resort to self-citation, so it is hard to define it as an unfair practice. There is nothing wrong or shameful in building on one’s previous work. In fact, doing so is itself a sign of a productive scientific path, and should not be treated as an academic misdemeanor. Still, the use of scientifically unnecessary self-citations is generally regarded as inappropriate.

Setting a clear line between an acceptable and a non-acceptable amount of self-citation is virtually impossible. Yet, given the present reliance on the h-index, we need to find a way to ensure that what it measures is not intentionally altered by the self-citing behaviors of individual scientists.

Calculating the h-index without including self-citations is one way to go. However, this “curated” h-index is inappropriate in instances where self-cites are the result of coordinated, sustained, leading-edge effort. Let’s appreciate rather than discard these legitimate citations. Furthermore, excluding self-citations does nothing to address the effect that once you cite yourself enough, others tend to follow. Ignoring the data gives a free pass to those using the strategy.

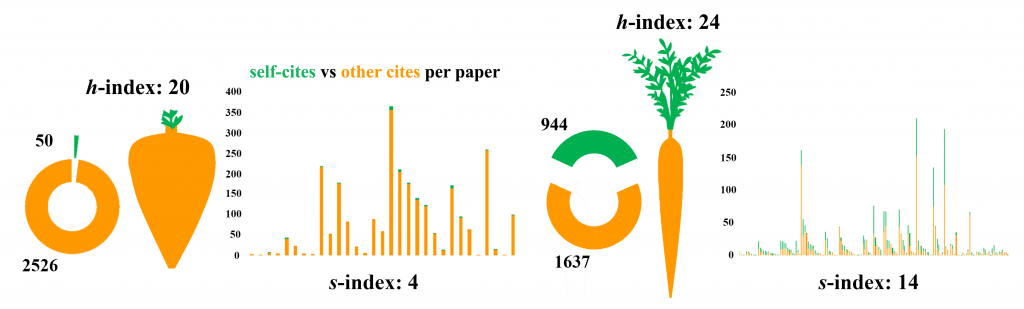

The best solution is to be transparent with self-citation reporting. Implementing this is easy, as we only need to turn the h-index inward to create what we refer to as a self-citation index, or s-index: a scientist has an index of s if he or she has published s articles that have each received at least s self-citations (Flatt et al., 2017). Defining s similarly to h makes it easy for people to understand. Importantly, pairing the h and s indices, unlike excluding self-citations, appreciates the worth of self-cites while at the same time deterring excessive tendencies. With respect to the latter, reporting instead of excluding self-cites will allow us for the first time to see clearly how much the different scientific fields are resorting to self-citing, thereby making excessive behavior more identifiable, explainable, and accountable. Our aim is not to establish a numerical threshold for an acceptable s-index. Rather, we propose a transparency-based approach whereby the s-index is shown alongside the h-index, providing an indication of how self-citation contributes to the bibliometric impact of one’s work. The approach does not criminalize self-citation, but it offers a tool to help make sense of it.
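To make the definition concrete, here is a minimal sketch of how the two indices could be computed side by side. The publication record is invented for illustration, the function name `index_from_counts` is hypothetical, and the sketch assumes that each paper's total citation count includes its self-citations. The “curated” h-index is included only for comparison.

```python
def index_from_counts(counts):
    """Return the largest n such that n papers have at least n citations each."""
    ranked = sorted(counts, reverse=True)
    n = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            n = rank
        else:
            break
    return n


# Hypothetical record: (total citations, self-citations) for each paper.
# Assumption: the total already includes the self-citations.
papers = [(30, 2), (18, 9), (12, 6), (7, 5), (5, 1), (3, 3)]

# h-index: computed on all citations, self-cites included.
h_index = index_from_counts(total for total, _ in papers)

# s-index: the same rule applied to self-citations only.
s_index = index_from_counts(self_cites for _, self_cites in papers)

# "Curated" h-index: self-citations removed before ranking.
curated_h = index_from_counts(total - self_cites for total, self_cites in papers)

print(f"h = {h_index}, s = {s_index}, curated h = {curated_h}")
```

Reporting the pair (h, s) keeps every citation in view, whereas the curated figure silently drops the self-citation data.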

Table 1: Curated h-index vs. s-index

| Curated h-index | s-index |
| --- | --- |
| Excludes all self-cites from h-index reporting | Pairs unrestrained h and s indices |
| Discards warranted self-citation | Appreciates self-citation that results from sustained, productive, leading-edge effort |
| Leaves room for citing oneself to attract outside cites | Promotes good citation habits |
| Serves to partially protect the h-index from gaming | Relies on peer review to score scientific impact and success |

Certainly some will question whether we really need a self-citation index (Davis, 2017). One concern is that the s-index can’t distinguish legitimate from illegitimate self-citation, or a prolific author from a shameless self-promoter for that matter. Let’s consider each point. First, when aiming to distinguish legitimate from illegitimate self-referencing, no metric can substitute for peer review. We believe that experts from the various disciplines will be able to handle the citation data responsibly and effectively. If not them, who? Second, when it comes to prolific authors, why unfairly punish them by discarding self-citations, especially in those cases where it may be the only data available? The same goes for early career researchers with fewer publications who legitimately use self-citations to make their work visible to their peers. It’s sensible to treat self-cites as signs of progress, rather than scrapping them as irrelevant.

Exposing how the h-index can be prone to alteration, the s-index speaks in favor of other more qualitative parameters being considered for the assessment of academic value. Moreover, different disciplines may have developed different self-citation habits – each of which is perfectly acceptable within that discipline. The s-index thus leaves room for each disciplinary community to adjust how to consider this measure based on its own standards.

In the end, we must decide which path to take. We can attack the gaming problem by hacking away at the citation data to neatly remove all occurrences of self-cites. However, we and others are not happy with such handling. The other option would be to embrace visibility in a community of peers, which relies on expert judgment rather than curated scorekeeping. Regardless, if metrics are only as valid as the data behind them, then we do well not to hide any of the useful bits.

Figure 1: A snapshot of citation habits for two researchers growing in the same field. Once the s-index is factored in, similar h-indices correspond to very different “carrots”. The s-index, however, does not say whether thin or thick carrots are to be preferred. This choice will largely depend, as it were, on the recipe, that is, on the purpose of the assessment itself. Including the s-index as an additional metric thus provides important context to guide decisions based on academic value.
